3f277c18dee8a6470cdda8a397ce71f56dabdc9e,Losses.py,,loss_HardNet,#,15

Before Change

```python
elif batch_reduce == "average":
    min_neg = torch.mean(dist_without_min_on_diag,1)[0]
    if anchor_swap:
        min_neg2 = torch.t(torch.mean(dist_without_min_on_diag,0)[0])
        min_neg = torch.min(min_neg,min_neg2)
    min_neg = torch.t(min_neg).squeeze(0)
elif batch_reduce == "random":
    idxs = torch.randperm(anchor.size()[0]).long()
    min_neg = dist_without_min_on_diag.gather(1,idxs.view(-1,1))
    if anchor_swap:
        min_neg2 = torch.t(dist_without_min_on_diag.gather(0,idxs.view(-1,1)))
        min_neg = torch.min(min_neg,min_neg2)
    min_neg = torch.t(min_neg).squeeze(0)
else:
    print ("Unknown batch reduce mode. Try min, average or random")
    sys.exit(1)
if loss_type == "triplet_margin":
    loss = torch.clamp(margin + pos - min_neg, min=0.0)
elif loss_type == "softmax":
    exp_pos = torch.exp(2.0 - pos);
    exp_den = exp_pos + torch.exp(2.0 - min_neg) + eps;
    loss = - torch.log( exp_pos / exp_den )
elif loss_type == "contrastive":
    loss = torch.clamp(margin - min_neg, min=0.0) + pos;
else:
```
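Both versions end with the same `loss_type` dispatch; only the way `min_neg` is chosen changes. For one anchor with positive distance `pos` and selected negative distance `min_neg`, the three branches reduce to the scalar formulas sketched below. The tensor code applies them elementwise, with `torch.clamp(x, min=0.0)` playing the role of `max(x, 0.0)`. The function name `hardnet_losses` and the defaults `margin=1.0` and `eps=1e-8` are assumptions chosen for illustration, not values taken from the repository.

```python
import math


def hardnet_losses(pos, neg, margin=1.0, eps=1e-8):
    """Scalar versions of the three loss_type branches (hypothetical helper).

    pos: anchor-positive distance; neg: distance to the selected negative (min_neg).
    The margin and eps defaults are illustrative assumptions.
    """
    # "triplet_margin": hinge on margin + pos - neg
    triplet_margin = max(margin + pos - neg, 0.0)
    # "softmax": negative log of the positive's share of exp(2 - distance)
    exp_pos = math.exp(2.0 - pos)
    exp_den = exp_pos + math.exp(2.0 - neg) + eps
    softmax = -math.log(exp_pos / exp_den)
    # "contrastive": push the negative beyond the margin, pull the positive toward 0
    contrastive = max(margin - neg, 0.0) + pos
    return {"triplet_margin": triplet_margin,
            "softmax": softmax,
            "contrastive": contrastive}


print(hardnet_losses(pos=0.5, neg=1.2))
```

Dividing numerator and denominator by `exp_pos` shows that, up to `eps`, the `softmax` branch equals `log(1 + exp(pos - min_neg))`, so the constant `2.0` cancels out and the loss depends only on the gap between the positive and negative distances.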

After Change

```python
elif batch_reduce == "average":
    min_neg = torch.mean(dist_without_min_on_diag,1)
    if anchor_swap:
        min_neg2 = torch.t(torch.mean(dist_without_min_on_diag,0))
        min_neg = torch.min(min_neg,min_neg2)
    min_neg = torch.t(min_neg).squeeze(0)
elif batch_reduce == "random":
    idxs = torch.autograd.Variable(torch.randperm(anchor.size()[0]).long()).cuda()
    min_neg = dist_without_min_on_diag.gather(1,idxs.view(-1,1))
    if anchor_swap:
        min_neg2 = torch.t(dist_without_min_on_diag).gather(1,idxs.view(-1,1))
        min_neg = torch.min(min_neg,min_neg2)
    min_neg = torch.t(min_neg).squeeze(0)
else:
    print ("Unknown batch reduce mode. Try min, average or random")
    sys.exit(1)
if loss_type == "triplet_margin":
    loss = torch.clamp(margin + pos - min_neg, min=0.0)
elif loss_type == "softmax":
    exp_pos = torch.exp(2.0 - pos);
    exp_den = exp_pos + torch.exp(2.0 - min_neg) + eps;
    loss = - torch.log( exp_pos / exp_den )
elif loss_type == "contrastive":
    loss = torch.clamp(margin - min_neg, min=0.0) + pos;
else:
```
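In the `random` branch, the swapped negative is where the two versions diverge. For an `(N, N)` matrix `D` and an `(N, 1)` index `idx`, `D.gather(1, idx)` selects `D[i][idx[i]]`, whereas `D.gather(0, idx)` selects `D[idx[i]][0]`. The Before line therefore drew every swapped negative from column 0, and its outer `torch.t` turned the result into a `(1, N)` row that no longer matched the `(N, 1)` shape of `min_neg`. Transposing the matrix before gathering, as the After line does, selects `D[idx[i]][i]`, the column-wise counterpart of the row pick. The sketch below checks this on a toy matrix using `gather_2d`, a hypothetical pure-Python helper written to follow the indexing rule documented for 2-D `torch.gather`; the matrix, the fixed permutation, and the helper are illustrative assumptions, not code from the repository.

```python
def gather_2d(matrix, dim, index):
    """Hypothetical stand-in for 2-D torch.gather:
    out[i][j] = matrix[index[i][j]][j] if dim == 0, else matrix[i][index[i][j]]."""
    if dim == 0:
        return [[matrix[index[i][j]][j] for j in range(len(index[i]))]
                for i in range(len(index))]
    return [[matrix[i][index[i][j]] for j in range(len(index[i]))]
            for i in range(len(index))]


def transpose(matrix):
    """Plays the role of torch.t for a list-of-lists matrix."""
    return [list(col) for col in zip(*matrix)]


# Toy 3x3 "distance matrix" with D[i][j] = 10*i + j, so every entry is traceable.
D = [[10 * i + j for j in range(3)] for i in range(3)]
# A fixed permutation shaped as a column, like idxs.view(-1, 1).
idxs = [[2], [0], [1]]

row_pick = gather_2d(D, 1, idxs)               # D[i][idxs[i]]
before_swap = gather_2d(D, 0, idxs)            # Before: D[idxs[i]][0], always column 0
after_swap = gather_2d(transpose(D), 1, idxs)  # After: D[idxs[i]][i]

print(row_pick)     # [[2], [10], [21]]
print(before_swap)  # [[20], [0], [10]]
print(after_swap)   # [[20], [1], [12]]
```

The remaining edits are smaller. `torch.min(x, dim)` returns a `(values, indices)` pair, so `[0]` is needed there, but `torch.mean(x, dim)` returns a single tensor, so the trailing `[0]` in the Before `average` branch kept only the first row's mean instead of one mean per row. Wrapping `idxs` in `torch.autograd.Variable(...).cuda()` was most likely needed because, before PyTorch 0.4 merged `Variable` into `Tensor`, `gather` on a GPU `Variable` expected its index to be a CUDA `Variable` as well; in current PyTorch a plain index tensor on the same device suffices.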

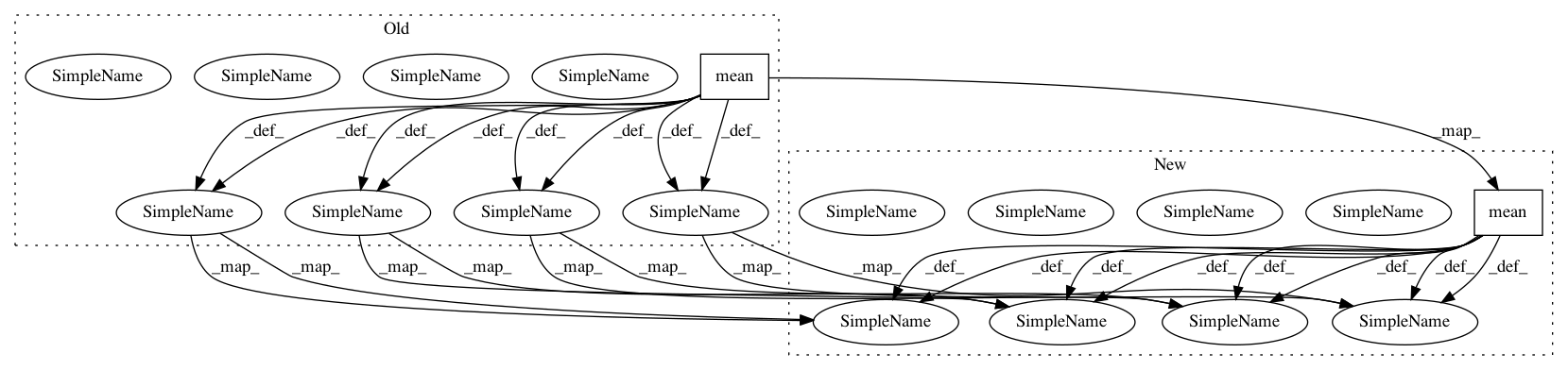

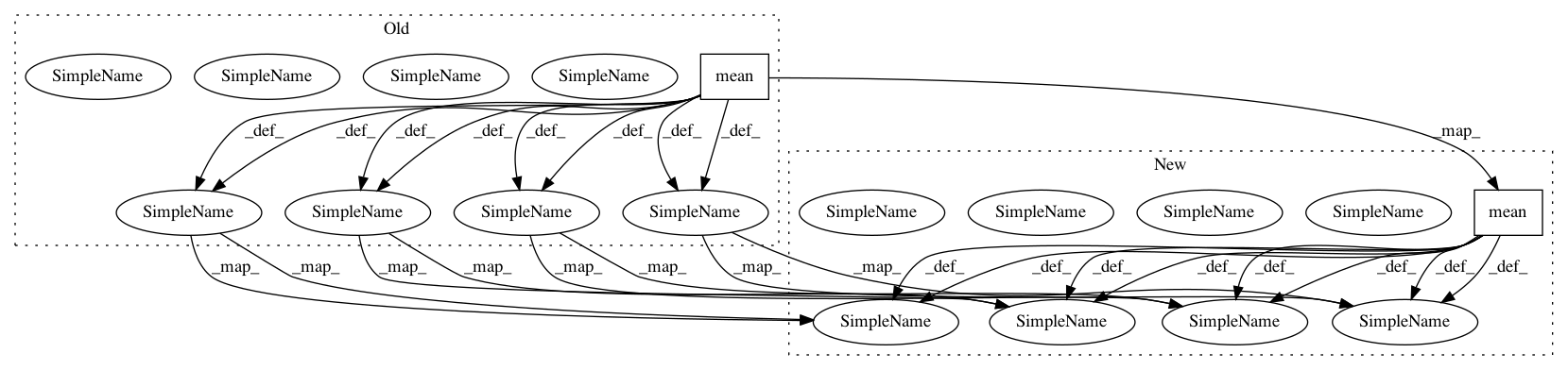

In pattern: SUPERPATTERN

Frequency: 3

Non-data size: 2

Instances

Project Name: DagnyT/hardnet

Commit Name: 3f277c18dee8a6470cdda8a397ce71f56dabdc9e

Time: 2017-07-25

Author: ducha.aiki@gmail.com

File Name: Losses.py

Class Name:

Method Name: loss_HardNet

Project Name: mariogeiger/se3cnn

Commit Name: dbd087f55b79484532451d2754dcf10d60564fc3

Time: 2018-10-18

Author: michal.tyszkiewicz@gmail.com

File Name: se3cnn/batchnorm.py

Class Name: SE3BatchNorm

Method Name: forward

Project Name: DagnyT/hardnet

Commit Name: 3f277c18dee8a6470cdda8a397ce71f56dabdc9e

Time: 2017-07-25

Author: ducha.aiki@gmail.com

File Name: Losses.py

Class Name:

Method Name: loss_HardNet