066fda55de8b2a65d57b6201d5b37daa6f7f399d,catalyst/rl/onpolicy/algorithms/ppo.py,PPO,get_rollout,#PPO#,30

Before Change

rewards = np.array(rewards)[:trajectory_len]

values = torch.zeros((states.shape[0] + 1, 1)).to(self._device)

values[:states.shape[0]] = self.critic(states)

values = values.cpu().numpy().reshape(-1)[:trajectory_len+1]

_, logprobs = self.actor(states, logprob=actions)

logprobs = logprobs.cpu().numpy().reshape(-1)[:trajectory_len]

deltas = rewards + self.gamma * values[1:] - values[:-1]

advantages = geometric_cumsum(self.gamma, deltas)[0]

returns = geometric_cumsum(self.gamma * self.gae_lambda, rewards)[0]

rollout = {

"return": returns,

"value": values[:trajectory_len],

"advantage": advantages,

After Change

}

@torch.no_grad()

def get_rollout(self, states, actions, rewards, dones):

trajectory_len = \

rewards.shape[0] if dones[-1] else rewards.shape[0] - 1

states = self._to_tensor(states)

actions = self._to_tensor(actions)

rewards = np.array(rewards)[:trajectory_len]

values = torch.zeros((states.shape[0] + 1, self._num_heads)).\

to(self._device)

values[:states.shape[0], :] = self.critic(states).squeeze(-1)

# Each column corresponds to a different gamma

values = values.cpu().numpy()[:trajectory_len+1, :]

_, logprobs = self.actor(states, logprob=actions)

logprobs = logprobs.cpu().numpy().reshape(-1)[:trajectory_len]

deltas = rewards[:, None] + self._gammas * values[1:] - values[:-1]

# len x num_heads

# For each gamma in the list of gammas compute the

# advantage and returns

advantages = np.stack([

geometric_cumsum(gamma, deltas[:, i])[0]

for i, gamma in enumerate(self._gammas)

], axis=1)  # len x num_heads

returns = np.stack([

geometric_cumsum(gamma * self.gae_lambda, rewards)[0]

for gamma in self._gammas

], axis=1)  # len x num_heads

rollout = {

"return": returns,

"value": values[:trajectory_len],

"advantage": advantages,

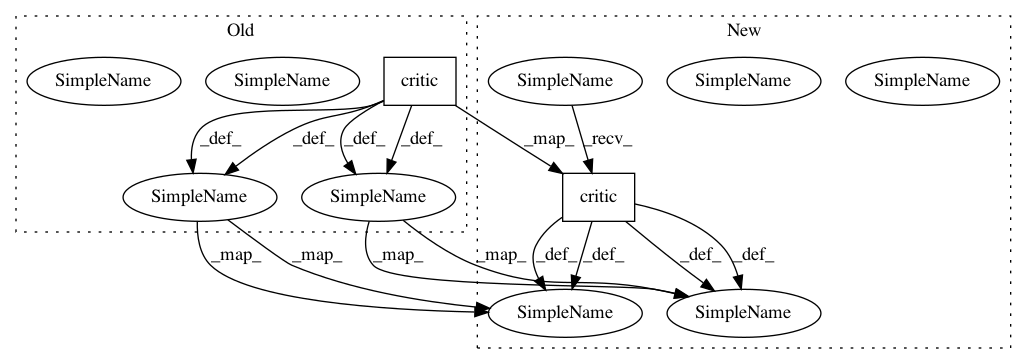

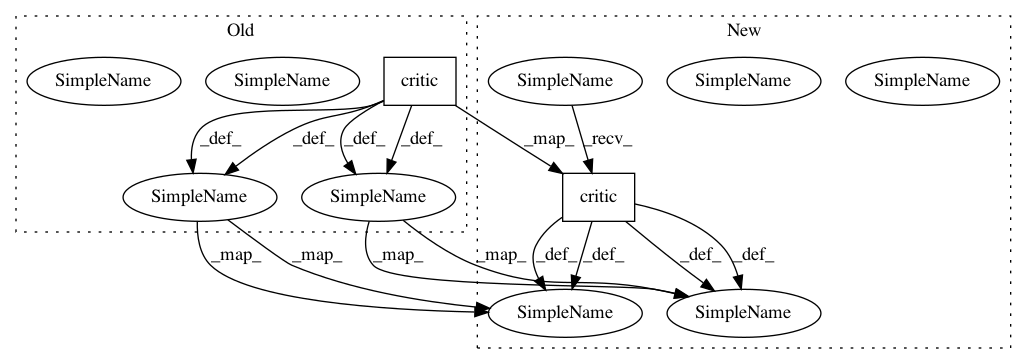
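The core of the change, going from a single `gamma` to one value-head column per discount factor, can be illustrated in isolation. The sketch below is not the catalyst implementation: `discounted_cumsum` is a hypothetical plain-NumPy stand-in for `geometric_cumsum` (whose exact return shape is not shown in the snippet), and `multi_gamma_advantages` is an illustrative name. It mirrors the snippet's approach of broadcasting the rewards against a vector of gammas and then accumulating each column of deltas with its own gamma.

```python
import numpy as np


def discounted_cumsum(alpha, x):
    """Hypothetical stand-in for geometric_cumsum: y[t] = x[t] + alpha * y[t + 1]."""
    x = np.asarray(x, dtype=np.float64)
    out = np.zeros_like(x)
    running = 0.0
    # Walk the trajectory backwards so each step reuses the tail sum.
    for t in range(len(x) - 1, -1, -1):
        running = x[t] + alpha * running
        out[t] = running
    return out


def multi_gamma_advantages(rewards, values, gammas):
    """Per-head advantages, one column per discount factor.

    rewards: shape (len,)
    values:  shape (len + 1, num_heads), the last row is the bootstrap value
    gammas:  shape (num_heads,)
    """
    rewards = np.asarray(rewards, dtype=np.float64)
    values = np.asarray(values, dtype=np.float64)
    gammas = np.asarray(gammas, dtype=np.float64)
    # rewards[:, None] has shape (len, 1) and broadcasts across the heads.
    deltas = rewards[:, None] + gammas * values[1:] - values[:-1]
    # As in the snippet above, each column is accumulated with its own gamma.
    return np.stack(
        [discounted_cumsum(g, deltas[:, i]) for i, g in enumerate(gammas)],
        axis=1,
    )  # len x num_heads


advantages = multi_gamma_advantages(
    rewards=[1.0, 1.0, 1.0],
    values=np.zeros((4, 2)),
    gammas=[0.5, 1.0],
)
print(advantages.shape)  # (3, 2)
```

With zero value estimates the deltas equal the rewards, so each column reduces to the discounted reward-to-go for its gamma, which makes the per-head behaviour easy to check by hand.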

In pattern: SUPERPATTERN

Frequency: 3

Non-data size: 2

Instances

Project Name: catalyst-team/catalyst

Commit Name: 066fda55de8b2a65d57b6201d5b37daa6f7f399d

Time: 2019-06-10

Author: khrulkov.v@gmail.com

File Name: catalyst/rl/onpolicy/algorithms/ppo.py

Class Name: PPO

Method Name: get_rollout

Project Name: catalyst-team/catalyst

Commit Name: c27dbde9ccec2920f3825538aff07e8533e086ba

Time: 2019-07-24

Author: scitator@gmail.com

File Name: catalyst/rl/offpolicy/algorithms/dqn.py

Class Name: DQN

Method Name: _categorical_loss

Project Name: catalyst-team/catalyst

Commit Name: c27dbde9ccec2920f3825538aff07e8533e086ba

Time: 2019-07-24

Author: scitator@gmail.com

File Name: catalyst/rl/offpolicy/algorithms/dqn.py

Class Name: DQN

Method Name: _quantile_loss
