641a28fbf0daff0ad1ad0f43d2c4b545cb6f9656,examples/reinforcement_learning/tutorial_cartpole_ac.py,Actor,choose_action,#Actor#,110

Before Change

return exp_v

def choose_action(self, s):

probs = self.sess.run(self.acts_prob, {self.s: [s]})  # get probabilities of all actions

return tl.rein.choice_action_by_probs(probs.ravel())

def choose_action_greedy(self, s):

After Change

def choose_action(self, s):

# probs = self.sess.run(self.acts_prob, {self.s: [s]})  # get probabilities of all actions

_logits = self.model([s]).outputs

_probs = tf.nn.softmax(_logits).numpy()

return tl.rein.choice_action_by_probs(_probs.ravel())

def choose_action_greedy(self, s):
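
The change replaces a session call that returned action probabilities with a forward pass that returns logits, followed by an explicit softmax and a sampling step. A minimal sketch of those last two steps, using only the Python standard library so it runs without TensorFlow or TensorLayer: the `softmax` and `choose_action` functions here are hypothetical stand-ins for `tf.nn.softmax` and `tl.rein.choice_action_by_probs`, not the library implementations.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a flat list of logits."""
    m = max(logits)  # subtract the max so exp() cannot overflow
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def choose_action(logits, rng=random):
    """Sample an action index with probability proportional to softmax(logits).

    Assumption: this mirrors the role of tl.rein.choice_action_by_probs
    when it is given a flat probability vector; it is not that function.
    """
    probs = softmax(logits)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


if __name__ == "__main__":
    logits = [0.1, 2.0]  # illustrative values, e.g. two CartPole actions
    print(softmax(logits))
    print(choose_action(logits))
```

Sampling from the distribution (rather than always taking the largest logit) keeps the actor exploring during training; a greedy variant would instead pick the index of the maximum.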

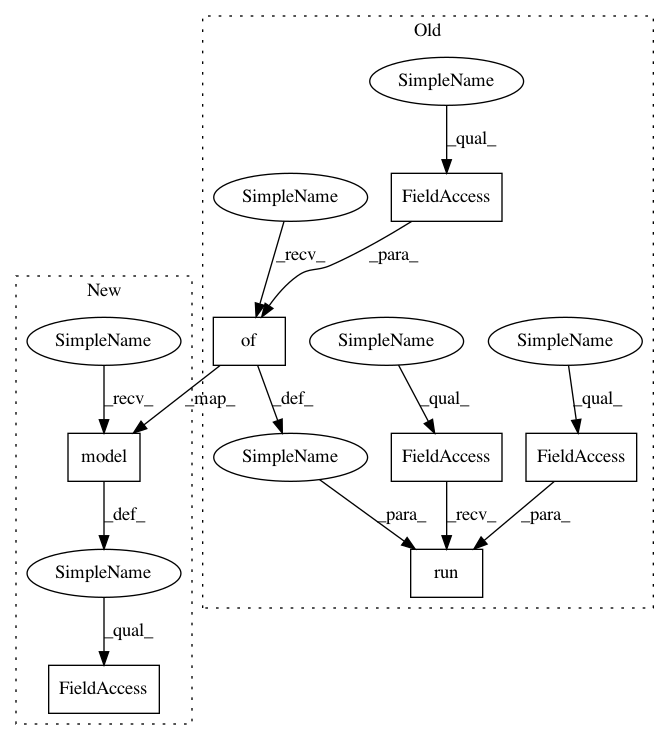

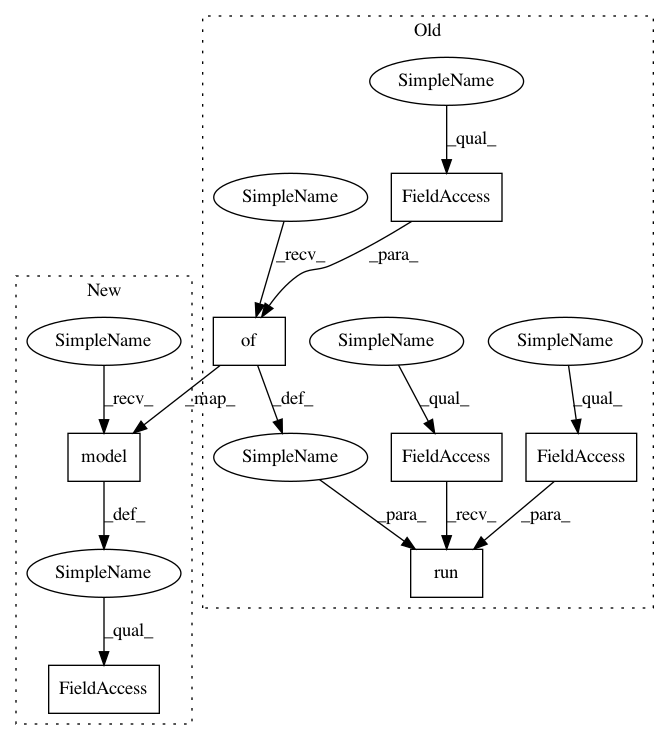

In pattern: SUPERPATTERN

Frequency: 3

Non-data size: 7

Instances

Project Name: tensorlayer/tensorlayer

Commit Name: 641a28fbf0daff0ad1ad0f43d2c4b545cb6f9656

Time: 2019-02-16

Author: dhsig552@163.com

File Name: examples/reinforcement_learning/tutorial_cartpole_ac.py

Class Name: Actor

Method Name: choose_action

Project Name: tensorlayer/tensorlayer

Commit Name: 641a28fbf0daff0ad1ad0f43d2c4b545cb6f9656

Time: 2019-02-16

Author: dhsig552@163.com

File Name: examples/reinforcement_learning/tutorial_cartpole_ac.py

Class Name: Critic

Method Name: learn

Project Name: tensorlayer/tensorlayer

Commit Name: 641a28fbf0daff0ad1ad0f43d2c4b545cb6f9656

Time: 2019-02-16

Author: dhsig552@163.com

File Name: examples/reinforcement_learning/tutorial_cartpole_ac.py

Class Name: Actor

Method Name: choose_action_greedy